
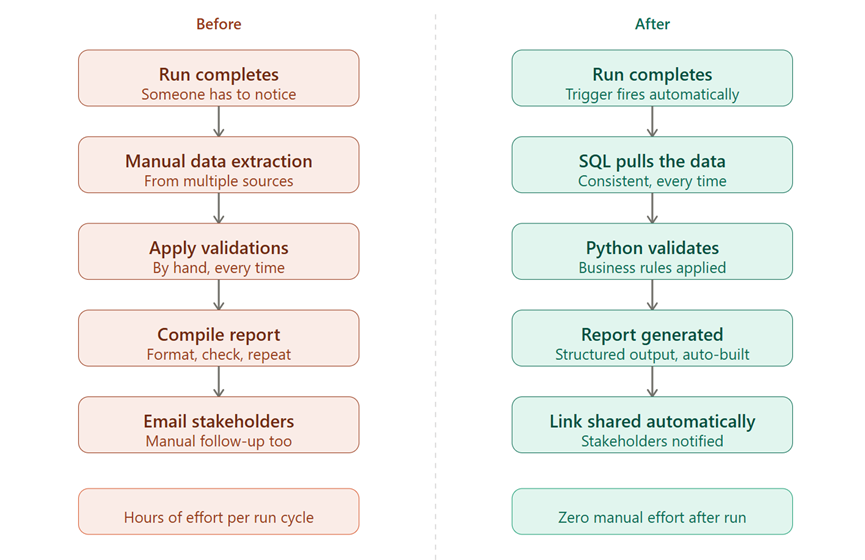

If you haven't read my last post on automating post-run reports, the short version: we went from manually preparing reports to a system that pulls data, generates the report, and shares the link automatically.

Read the blog here!

But here's the thing: we had a similar problem in another project, and it happened during the UAT phase. Read on to see how we approached it by recycling the existing framework and building an automated routine so clean that even an intern, or anyone new to the process, could easily run it and generate UAT reports.

UAT IS ITS OWN KIND OF REPETITIVE

Every UAT cycle brings multiple scenarios to validate. Same checks, different datasets. Reruns, follow-ups, status chasers. No single task is hard. But together, they consume days. Our instinct was to build something new. Purpose-built for UAT. Start from scratch. Then someone asked the right question: do we actually need to start over? The framework was already there.

What we had: SQL for logic, Python to stitch it together, a clear flow from data to output. That's not a one-off script. That's a pattern. And patterns are meant to be reused. So instead of rebuilding, we asked: what needs to change for UAT, and what can stay exactly the same? That shift in framing made everything faster.
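To make the pattern concrete, here is a minimal sketch of "SQL for logic, Python to stitch it together". It is not our actual code: the `orders` table, the two checks, and the function names are all hypothetical, and an in-memory SQLite database stands in for whatever system you query. The idea is that each check is just a named SQL statement that counts offending rows, so adapting the routine to a new scenario means editing the dictionary, not the runner.

```python
import sqlite3

# Hypothetical checks: each name maps to SQL that counts offending rows.
CHECKS = {
    "missing_amount": "SELECT COUNT(*) FROM orders WHERE amount IS NULL",
    "negative_amount": "SELECT COUNT(*) FROM orders WHERE amount < 0",
}


def run_checks(conn, checks):
    """Run every SQL check and return one result dict per check."""
    results = []
    for name, sql in checks.items():
        bad_rows = conn.execute(sql).fetchone()[0]
        results.append({
            "check": name,
            "bad_rows": bad_rows,
            "status": "PASS" if bad_rows == 0 else "FAIL",
        })
    return results


def build_demo_db():
    """Create a throwaway in-memory table (illustrative data only)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, amount REAL)")
    conn.executemany(
        "INSERT INTO orders (id, amount) VALUES (?, ?)",
        [(1, 10.0), (2, None), (3, -5.0), (4, 20.0)],
    )
    return conn


if __name__ == "__main__":
    for row in run_checks(build_demo_db(), CHECKS):
        print(row)
```

Keeping the SQL in data rather than in code is what makes this kind of routine reusable: the runner stays the same while the set of checks changes per project.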

THIS IS WHERE AI STOPPED BEING A BUILDER AND BECAME AN ADAPTER

UAT automation became something we did not need to build from scratch. I fed our existing logic into AI, explained what was different about UAT, and asked it to help me adapt. And it did. SQL queries were modified for new validation scenarios. Python scripts were adjusted to handle the parts of UAT that needed a slightly different flow. Where full automation wasn't possible because of environment constraints, we added some manual checkpoints and kept moving.

AI wasn't just a tool for building things. It also became a customisation engine for things we'd already built. That's an important distinction. Those of us who are functional professionals, especially, tend to think of AI as something that builds from zero. But adapting existing logic? That's often where the real value is.

WHAT THE SOLUTION LOOKED LIKE

Let me be straightforward about this: it wasn't a fully automated system, but the outcome fit our need to reduce the team's time and manual effort during a crucial phase like UAT. What was automated, and made the biggest difference in time saved: data extraction, repetitive validations, structured output generation. The heavy, soul-crushing, same-thing-every-time work. What stayed manual: certain business validations, environment-specific checks, final interpretation. The parts that genuinely needed a human. This balance worked because it was practical, not just elegant.
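One way to picture that split is a report that lists automated results next to manual checkpoints still waiting for a human. The sketch below is an assumption about how such a report could be assembled, not a copy of ours: the function names, the column names, and the `PENDING` status are all made up for illustration.

```python
import csv
import io


def build_report(automated_results, manual_checkpoints):
    """Combine automated check results with manual items awaiting sign-off."""
    rows = [
        {"item": r["check"], "type": "automated", "status": r["status"]}
        for r in automated_results
    ]
    # Manual items start as PENDING so nobody mistakes them for passed checks.
    rows += [
        {"item": name, "type": "manual", "status": "PENDING"}
        for name in manual_checkpoints
    ]
    return rows


def to_csv(rows):
    """Render report rows as CSV text with a fixed column order."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=["item", "type", "status"], lineterminator="\n"
    )
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


if __name__ == "__main__":
    automated = [{"check": "row_count_match", "status": "PASS"}]
    manual = ["business sign-off", "environment check"]
    print(to_csv(build_report(automated, manual)))
```

Listing the manual steps explicitly in the output keeps the human parts visible instead of leaving them as tribal knowledge.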

THE PART I DIDN'T EXPECT

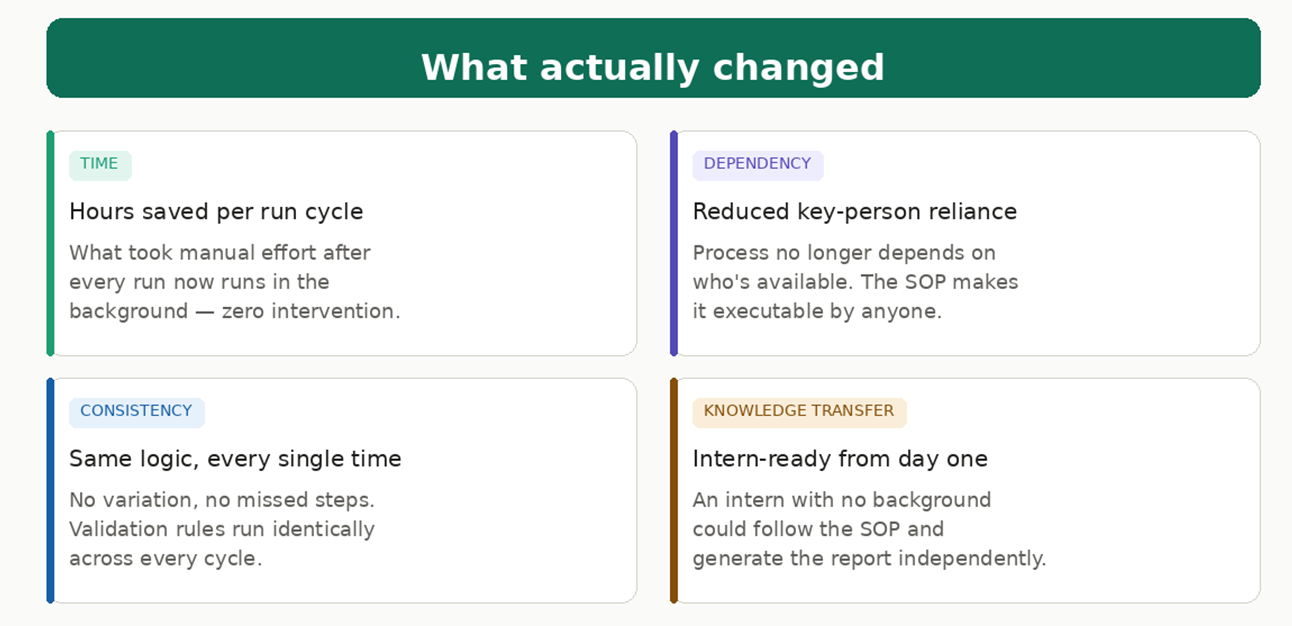

The biggest win wasn't the time saved by our team. It was what happened when we documented the process as an SOP.

An intern — no prior system background, no context on what we were doing — could follow it and generate the report.

That's not a small thing. That's knowledge transfer without a knowledge-transfer session. That's reduced dependency on the two or three people who "know how it works." That's a process that can survive someone going on leave.

And if it works for UAT, it can work for other teams running similar checks. The same framework, tweaked again, deployed elsewhere. The time savings start to compound.

SO WHAT'S THE ACTUAL TAKEAWAY HERE?

Don't rebuild what you can adapt. Your first automation or framework, however small, is the hardest one. After that, you have a base and a pattern. And with AI, adapting that pattern for a new use case is far less effort than starting fresh.

For functional professionals, this is genuinely exciting. You don't need to think like a developer to do this. You need to think clearly about your process, describe it well, and trust that AI can handle the translation.