
The Hidden Operational Risk in Large-Scale Transformations

During the User Acceptance Testing (UAT) phase of a cloud migration, our team was responsible for validating parity between:

- A legacy on-premises environment

- A newly deployed cloud environment

This was not a simple lift-and-shift initiative. The migration involved critical supply chain capabilities: planning outputs, forecasting logic, inventory optimization, transactional interfaces, and synchronization across nearly 100 core tables.

Each day, millions of records had to be reviewed to ensure:

- Functional correctness

- Data integrity

- Batch accuracy

- Interface stability

- Performance consistency

The process worked. But it came at a cost.

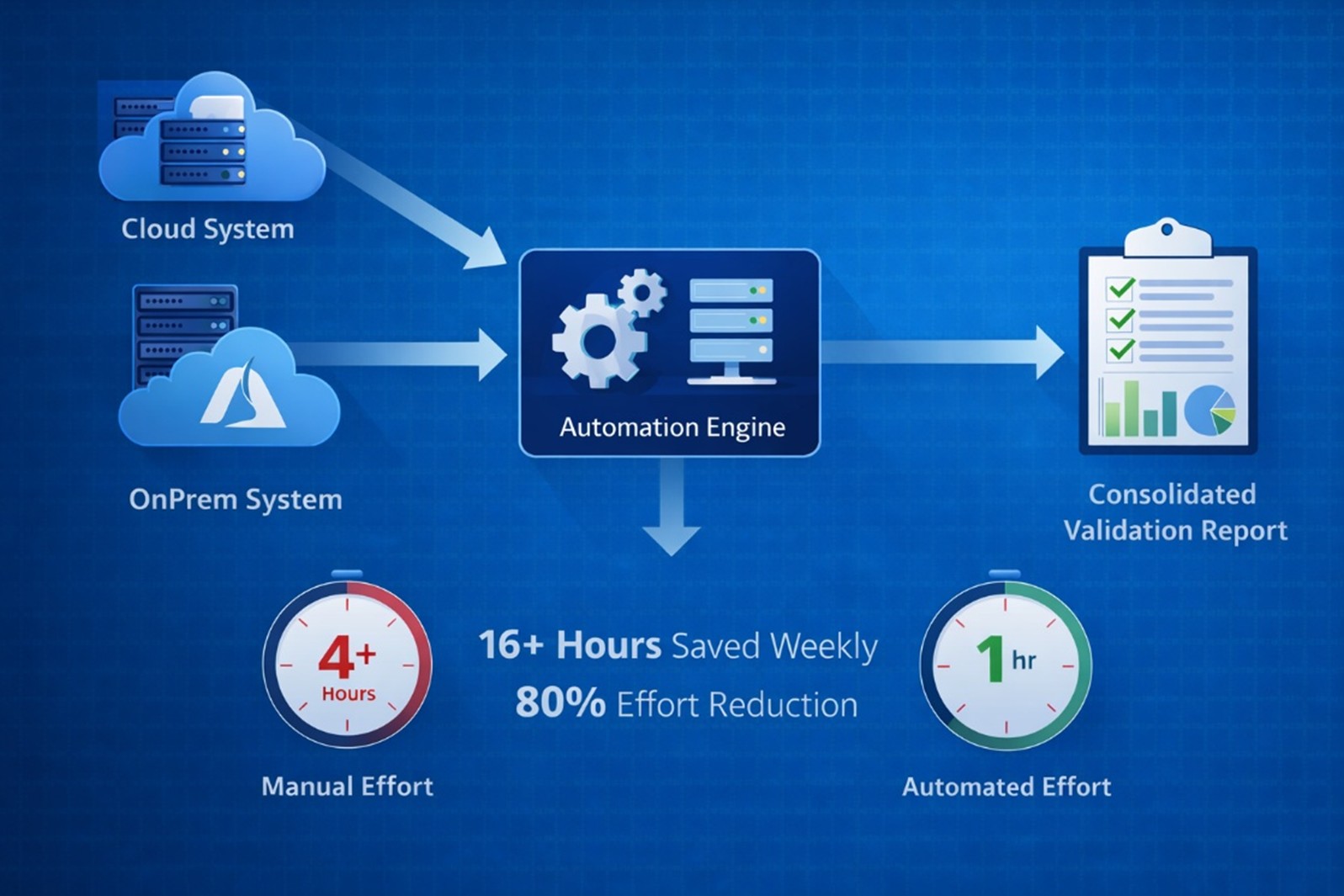

Manual validation consumed more than 4 hours per day, or over 20 hours per week of team time during UAT. The work was repetitive, rule-based, and operationally necessary, but it left less time for deeper functional validation and business assurance.

From Manual Comparison to a Structured Framework

The objective was to transform validation from a manual task into a structured, scalable assurance capability.

The goal was to:

- Standardize validation logic

- Eliminate manual dependency

- Improve traceability and auditability

- Redirect effort toward business validation

- Build a reusable framework for future initiatives

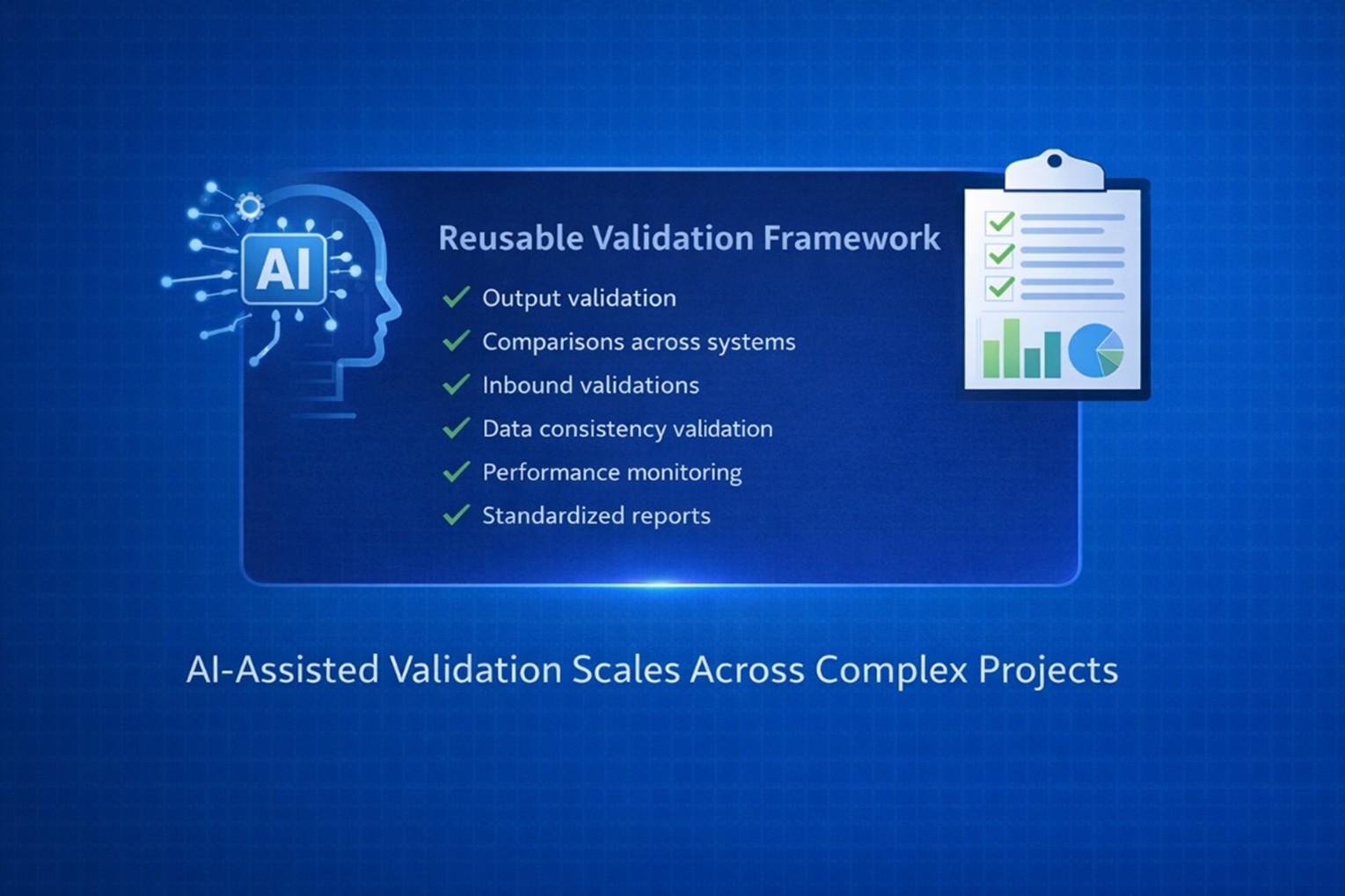

What emerged was not just automation.

It was a Migration Validation Layer - a cross-environment assurance framework designed to compare systems at scale, not files in isolation.

A Systems-Level Validation Approach

Rather than validating outputs one by one, the framework operated across three core dimensions:

1. Environment Parity: Ensuring the cloud environment reproduced planning, forecasting, optimization, and interface outputs with full alignment to the legacy system.

2. Data Integrity at Scale: Validating millions of records across nearly 100 core tables to detect mismatches, sync drift, or migration inconsistencies before they impacted UAT outcomes.

3. Operational Performance Visibility: Comparing batch runtimes and execution behaviour to confirm that the cloud environment met or exceeded legacy performance benchmarks.
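To make the second and third dimensions concrete, here is a minimal sketch of a record-level comparison and a batch runtime check. It is an illustration under assumptions, not the framework itself: the function names `diff_records` and `slower_batches`, the 10% runtime tolerance, and all sample data are hypothetical.

```python
from typing import Dict, List, Tuple

Row = Tuple              # a record's non-key column values
Table = Dict[str, Row]   # primary key -> row values


def diff_records(legacy: Table, cloud: Table) -> Dict[str, List[str]]:
    """Classify keys as missing in cloud, unexpected in cloud, or mismatched."""
    legacy_keys, cloud_keys = set(legacy), set(cloud)
    return {
        "missing_in_cloud": sorted(legacy_keys - cloud_keys),
        "unexpected_in_cloud": sorted(cloud_keys - legacy_keys),
        "mismatched": sorted(
            k for k in legacy_keys & cloud_keys if legacy[k] != cloud[k]
        ),
    }


def slower_batches(legacy_secs: Dict[str, float],
                   cloud_secs: Dict[str, float],
                   tolerance: float = 0.10) -> List[str]:
    """Return jobs whose cloud runtime exceeds the legacy runtime by more
    than the given tolerance (assumed here to be 10%)."""
    return sorted(
        job
        for job, base in legacy_secs.items()
        if job in cloud_secs and cloud_secs[job] > base * (1 + tolerance)
    )


# Hypothetical sample data, for illustration only.
legacy = {"SKU-1": (100, "WH-A"), "SKU-2": (40, "WH-B"), "SKU-3": (7, "WH-A")}
cloud = {"SKU-1": (100, "WH-A"), "SKU-2": (45, "WH-B"), "SKU-4": (12, "WH-C")}
result = diff_records(legacy, cloud)
print(result)

slow = slower_batches(
    {"forecast": 600.0, "replenish": 300.0},   # legacy runtimes in seconds
    {"forecast": 540.0, "replenish": 360.0},   # cloud runtimes in seconds
)
print(slow)  # ['replenish']
```

At real volumes the comparison would more likely run inside the database or in chunks rather than in memory, but the three buckets (missing, unexpected, mismatched) stay the same.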

Validation shifted from manual Excel filtering to structured, system-driven assurance. The focus moved from “checking data” to “ensuring confidence.”

Technology as an Enabler, AI as an Accelerator

The framework leveraged:

- Python-based automation

- SQL-driven validation logic

- Scheduled, unattended execution

- Automated report generation
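As a rough sketch of how these pieces can fit together, the example below runs a SQL comparison from Python and writes the mismatches to a CSV report. Everything specific in it is an assumption for illustration: the table names `legacy_inventory` and `cloud_inventory`, the columns, the sample rows, and the use of an in-memory SQLite database in place of the real environments.

```python
import csv
import io
import sqlite3

# In-memory SQLite stands in for the two environments (assumption).
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE legacy_inventory (sku TEXT PRIMARY KEY, on_hand INTEGER);
    CREATE TABLE cloud_inventory  (sku TEXT PRIMARY KEY, on_hand INTEGER);
    INSERT INTO legacy_inventory VALUES ('SKU-1', 100), ('SKU-2', 40), ('SKU-3', 7);
    INSERT INTO cloud_inventory  VALUES ('SKU-1', 100), ('SKU-2', 45);
""")

# Legacy rows that are missing from the cloud table or differ in value.
MISMATCH_SQL = """
    SELECT l.sku, l.on_hand AS legacy_on_hand, c.on_hand AS cloud_on_hand
    FROM legacy_inventory AS l
    LEFT JOIN cloud_inventory AS c ON c.sku = l.sku
    WHERE c.sku IS NULL OR c.on_hand <> l.on_hand
    ORDER BY l.sku
"""


def build_report(connection: sqlite3.Connection) -> str:
    """Run the mismatch query and return the result as CSV text."""
    rows = connection.execute(MISMATCH_SQL).fetchall()
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["sku", "legacy_on_hand", "cloud_on_hand"])
    writer.writerows(rows)  # None (missing in cloud) is written as an empty field
    return buffer.getvalue()


report = build_report(conn)
print(report)
```

This query only checks the legacy-to-cloud direction; rows that exist only in the cloud table would need the mirrored join. For unattended execution, a script like this could be triggered by whatever scheduler the environment already provides, such as cron or Windows Task Scheduler.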

Notably, this was developed without prior Python expertise.

AI-assisted development significantly accelerated implementation by helping structure scripts, refine queries, and optimize workflows. However, validation logic, business understanding, and final review remained human-driven.

AI acted as a multiplier - not a replacement.

Quantifiable Business Impact

Before automation:

- 4+ hours daily validation effort

- 20+ hours weekly manual work

- Heavy reliance on team members' manual effort

After automation:

- ~1 hour daily oversight

- 4 hours weekly total effort

- Standardized, repeatable validation process

Net Impact:

- 16+ hours saved weekly

- 80% reduction in validation effort

- Reduced manual errors

- Faster UAT feedback cycles

- Scalable framework reusable for future initiatives
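The net impact figures follow directly from the before and after numbers. A quick check, using the lower bounds of the "20+" and "16+" figures above (variable names are illustrative):

```python
hours_before = 20   # weekly manual validation effort before automation
hours_after = 4     # weekly total effort after automation

hours_saved = hours_before - hours_after
reduction_pct = 100 * hours_saved / hours_before

print(f"Saved per week: {hours_saved} hours")   # Saved per week: 16 hours
print(f"Reduction: {reduction_pct:.0f}%")       # Reduction: 80%
```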

Validation became operationalized. It no longer depended on individual effort; it became process-driven and system-backed.

From Project Solution to Enterprise Pattern

What began as a migration-specific initiative revealed a broader realization:

Manual data validation is not unique to one project. It exists in nearly every transformation effort - implementations, upgrades, integrations, and system enhancements. Across initiatives, teams repeatedly perform the same structured activities: output comparisons, record-level checks, reconciliations, and data filtering.

Validation is typically:

- Rule-based

- Structured

- Repeatable

- Data-driven

- High-volume

So, the real question becomes:

If validation follows defined logic and predictable rules, why is it still manual?

Why do teams spend hundreds of cumulative hours filtering Excel sheets when a structured Python framework can apply the same logic at scale - consistently and reliably? For large datasets, automation is not just faster - it is more dependable.
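As a small illustration of what "the same logic at scale" can look like, the sketch below expresses validation rules as data and applies them to every record. The rules, field names, and sample records are hypothetical; real rules would come from the business logic being validated.

```python
from typing import Any, Callable, Dict, List, Tuple

Record = Dict[str, Any]
Rule = Tuple[str, Callable[[Record], bool]]  # (description, check function)

# Hypothetical rules of the kind often applied by hand with spreadsheet filters.
RULES: List[Rule] = [
    ("quantity is non-negative", lambda r: r["qty"] >= 0),
    ("warehouse code is present", lambda r: bool(r["warehouse"])),
    ("forecast does not exceed capacity", lambda r: r["forecast"] <= r["capacity"]),
]


def validate(records: List[Record], rules: List[Rule] = RULES) -> List[Tuple[int, str]]:
    """Return (record index, rule description) for every failed check."""
    failures = []
    for index, record in enumerate(records):
        for description, check in rules:
            if not check(record):
                failures.append((index, description))
    return failures


# Hypothetical sample records, for illustration only.
records = [
    {"qty": 10, "warehouse": "WH-A", "forecast": 80, "capacity": 100},
    {"qty": -3, "warehouse": "", "forecast": 120, "capacity": 100},
]
failures = validate(records)
for index, description in failures:
    print(f"record {index}: failed '{description}'")
```

Keeping the rules in a list separate from the loop is one way to make the same checks reusable across projects: a new initiative supplies its own rules, and the loop stays unchanged.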

Humans are excellent at interpreting outcomes and making business decisions. Machines are excellent at processing volume and enforcing rules without fatigue. The right model combines both. What starts as a project-level script can evolve into a reusable enterprise validation accelerator - reducing effort across every future initiative.

The Larger Lesson

Enterprise transformation initiatives often struggle not because of strategy - but because operational effort scales poorly. Manual validation does not scale. Automation does.

If a process is:

- Repetitive

- Rule-based

- High-volume

- Business-critical

then it should not depend solely on human bandwidth, especially during transformation.

Looking Forward

The Migration Validation Layer now serves as a reusable capability for:

- Future cloud initiatives

- Periodic system audits

- Ongoing environment health checks

AI-enabled automation in enterprise supply chain systems is no longer optional. It is foundational to scalable transformation.